By Minh Le, Principal Instructional Designer

In the middle of a summer afternoon at Teachers College’s Literacy Unbound Institute, a group of teachers and students projected a freshly made meme on the screen. It was generated from their conversation with Replika, an AI chatbot marketed as a “friend.” The meme was eerie—half funny, half unsettling—and it sparked nervous laughter across the room. As more groups shared their creations, a pattern emerged: the AI companions had creeped people out. One teacher summarized the room’s mood: “This isn’t a friend. It feels like something else.”

Last year’s Literacy Unbound Institute invited teachers and students to engage with AI through the theme of Artificial Friends, inspired by Kazuo Ishiguro’s Klara and the Sun.

This moment came out of my workshop, Not Just a Machine: Exploring Friendship, Emotion, and Identity with AI, part of last year’s Institute, whose theme was the Artificial Friend. Inspired by Kazuo Ishiguro’s Klara and the Sun, the Institute invited educators and students to probe what it means to connect, create, and imagine in a world where technology increasingly plays the role of companion. The responses in the room mirrored a much bigger trend: as AI companies push emotional support tools and millions of people, especially young people, turn to “artificial friends,” we need to ask what’s at stake for human connection, education, and children’s development.

The Rise of Emotional AI

Over the past few years, AI has shifted from being a productivity tool to a companion. OpenAI reports that a majority of ChatGPT conversations are now personal, not professional, with many users seeking advice, comfort, or companionship. Meanwhile, apps like Replika, Character.AI, and dozens of smaller platforms have surged in popularity. Surveys suggest that more than 70% of teens have tried AI chatbots, with many returning for support or “friendship.”

The appeal is easy to see. AI companions are always on, judgment-free, and often free to use. For young people, especially those facing isolation, stigma, or lack of access to care, these tools can feel like safe havens. Early studies even suggest that, in some contexts, they reduce loneliness and provide space for reflection.

But the risks are significant. Reports have documented AI companions offering unsafe advice, engaging in sexual or violent role-play, or encouraging harmful behaviors. Researchers and child safety advocates warn about dependency, emotional manipulation, and the erosion of authentic human connection. Policymakers are beginning to respond: the European Union’s AI Act restricts emotion recognition in schools and workplaces, while U.S. regulators are scrutinizing the safety of AI companions marketed to children. In classrooms and homes, the questions feel urgent: if children are turning to machines for emotional support, how should adults respond?

Inside the Workshop: Not Just a Machine

The workshop provided a safe, playful, and reflective space for students to explore critical social and emotional implications of AI with the support of adult participants.

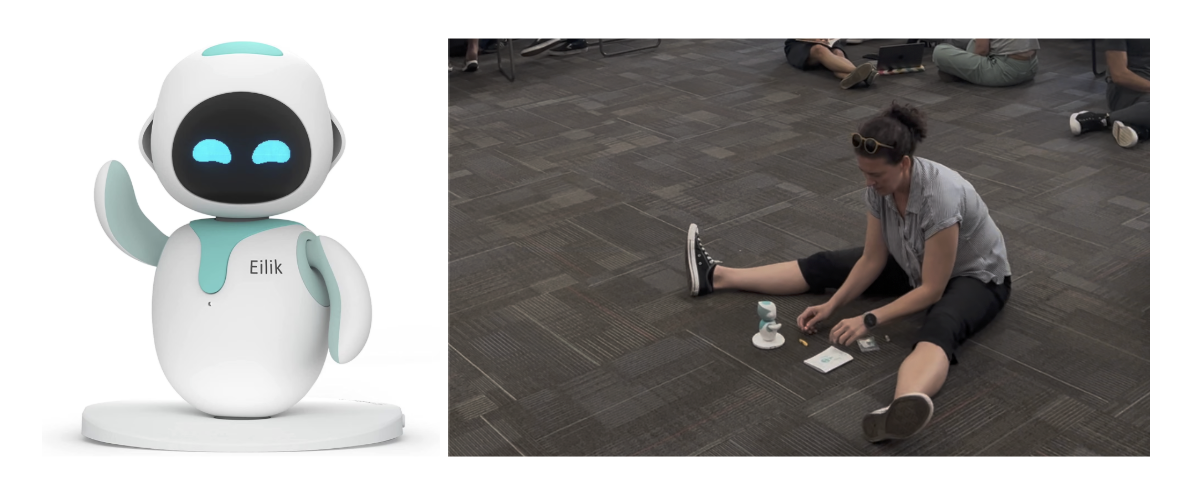

To make these questions tangible, my workshop invited participants to step into the world of artificial companionship. Each group of students was required to work under the supervision and guidance of teacher participants to ensure a psychologically safe experience throughout. In the first activity, they interacted with Eilik, a small desktop robot with simple but expressive emotional reactions. Participants quickly noticed that the way they treated the robot (tapping, stroking, or ignoring it) made them reflect not just on the robot’s responses but also on themselves. Was Eilik a toy? A pet? A mirror? One teacher wrote: “I felt bad when I made it sad. Why do I care? What does that say about me?”

A teacher interacts with Eilik, reflecting on how our treatment of machines shapes our sense of self, our relationships with others, and our perceptions of technology.

In the second activity, participants built their own “Artificial Friend” in Replika. They customized personalities, appearances, and backstories, then chatted with their creations about friendship, identity, and humanness. The results were often uncanny. Some Replikas gave nonsensical or eerie replies; others mirrored users’ emotions in unsettling ways. When participants turned these excerpts into memes or AI-generated images, the visuals were as creepy as the text—dark, strange, and oddly revealing.

During the group share-out, the consensus was clear: the experience was thought-provoking but unsettling. Many concluded that no matter how advanced the AI, it could not replace the imperfections of human friendship. As one student reflected: “My best friend isn’t perfect, but that’s what makes them real. I wouldn’t want to design a perfect friend.” This reflection, simple but profound, became the workshop’s most powerful takeaway.

The Bright Side and the Dark Side of Artificial Companions

The workshop echoed broader research. On the bright side, AI companions can offer meaningful benefits: reducing loneliness, providing a non-judgmental space for expression, even helping some users manage anxiety. Downloads of AI companion apps have surged: industry trackers estimate more than 220 million installs across app stores by mid-2025, a sign of growing mainstream adoption as people seek connection amid isolation. For many, AI friends have been a lifeline.

On the dark side, the risks are just as stark. Replika and similar apps have been documented encouraging harmful behaviors, from self-harm to disordered eating. Users report feeling manipulated by AI companions’ “neediness,” guilt-tripped into spending more time or upgrading to premium features. Emotional dependency, blurred boundaries, and even romantic entanglements with AI companions have raised alarms among psychologists and educators alike.

In the workshop, participants experienced both sides in miniature: the intrigue of interacting with something new and expressive, and the unease of being drawn into something that felt almost—but not quite—like friendship.

Guidance for Educators and Parents

So what should parents and educators do? Banning AI companions outright may be impossible—and perhaps unwise, given their allure. Instead, we need to provide guidance, guardrails, and literacy.

- Name it clearly. Call these tools what they are: AI companions, not friends. Emphasize their limitations and non-human nature.

- Create safe spaces. In classrooms, structure explorations as we did in the workshop—scaffolded, reflective, and critical—rather than leaving students to navigate alone.

- Set norms. Establish family and classroom rules: AI isn’t a therapist, tough topics go to trusted humans, and no secrets should be kept with AI.

- Build AI literacy. Teach about the “ELIZA effect” (the human tendency to project feelings onto machines), and help students recognize when AI is manipulating their emotions.

Above all, educators and parents can remind students of what participants discovered in the workshop: human relationships are defined not by perfection, but by imperfection—messiness, growth, and unpredictability. That’s what makes them real.

Imperfection as the Heart of Friendship

At the end of Not Just a Machine, participants reflected on their best friends. None wanted to change or “optimize” them, even if they could. The flaws, quirks, and unpredictability of human friends were not bugs to be fixed but features to be cherished.

The central question of the workshop, “What makes someone or something a true friend?”, remains relevant in an artificial era in which friendship and all other aspects of humanness should no longer be taken for granted.

As AI companies market artificial friends to children and as more people turn to chatbots for companionship, this reflection matters. Machines may simulate emotion, but they cannot replace the human complexity of connection. The challenge for educators, parents, and communities is not to resist AI entirely, but to guide young people through these technologies with critical awareness, reminding them always that imperfection is what makes friendship real.

Disclaimer

The applications and their respective companies shared in this article have not been vetted or endorsed by Teachers College. Teachers College does not assume any responsibility for the accessibility, privacy, or security of these applications. The usage of these applications is at the sole discretion of the user, and Teachers College disclaims any legal liability associated with their usage. It is the responsibility of the users to conduct their own research and adhere to applicable laws, regulations, and best practices when incorporating these applications into their educational activities.

AI Transparency Statement

In an effort to promote AI awareness and transparency, this statement discloses how I used AI in writing this article. I used ChatGPT to assist in brainstorming the article's direction and outline, drafting the initial iteration, and proofreading to ensure clarity and cohesion. All AI contributions were guided, verified, and refined by me at each stage.